Align AI Capability With Operational Reality

Why: AI projects fail not from lack of ambition, but from infrastructure that is misaligned with what the models are meant to do. Before scaling, organizations must honestly assess whether their operations can support what their models promise to deliver.

Who: Enterprise leaders, AI strategists, and operations teams preparing to move AI from pilot to production. Built for decision-makers who need clarity on readiness before committing resources to scale.

What: A practical AI readiness scorecard that evaluates organizational foundations. It identifies structural gaps between AI capability and operational reality, helping teams assess whether they’re truly prepared to scale or need groundwork first.

AI Readiness Scorecard

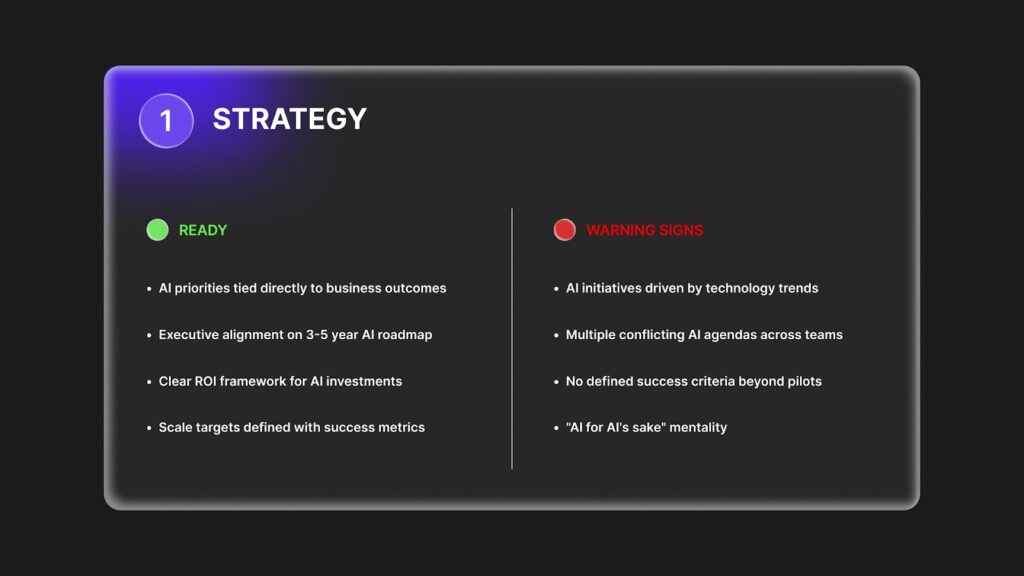

1. STRATEGY

READY

- AI priorities tied directly to business outcomes

- Executive alignment on 3-5 year AI roadmap

- Clear ROI framework for AI investments

- Scale targets defined with success metrics

WARNING SIGNS

- AI initiatives driven by technology trends

- Multiple conflicting AI agendas across teams

- No defined success criteria beyond pilots

- “AI for AI’s sake” mentality

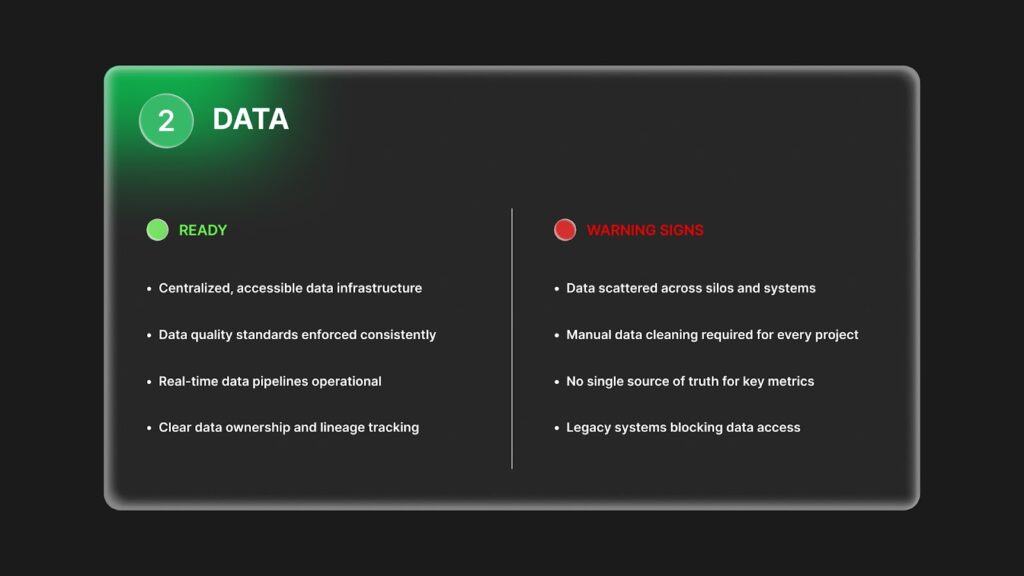

2. DATA

READY

- Centralized, accessible data infrastructure

- Data quality standards enforced consistently

- Real-time data pipelines operational

- Clear data ownership and lineage tracking

WARNING SIGNS

- Data scattered across silos and systems

- Manual data cleaning required for every project

- No single source of truth for key metrics

- Legacy systems blocking data access

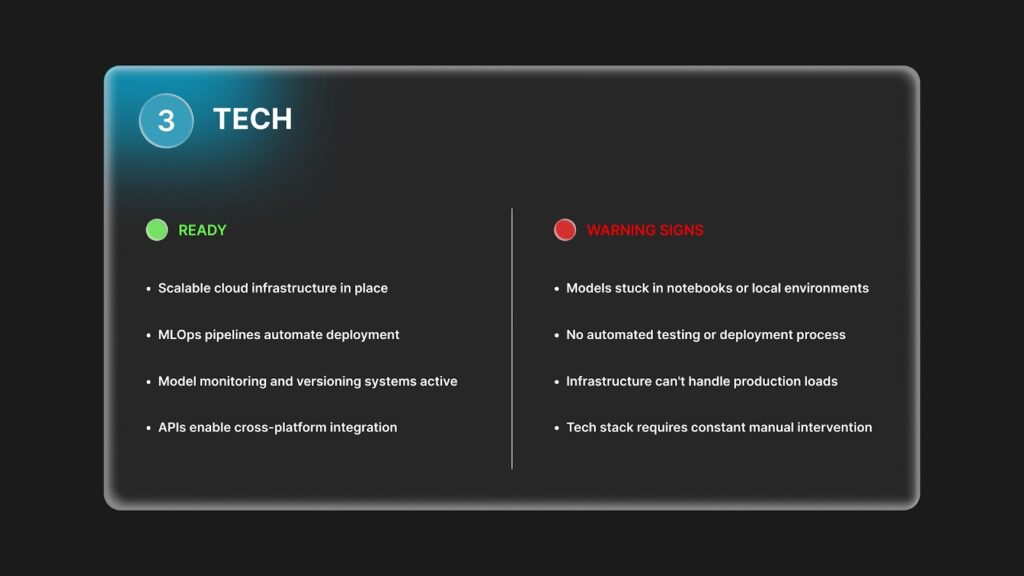

3. TECH

READY

- Scalable cloud infrastructure in place

- MLOps pipelines automate deployment

- Model monitoring and versioning systems active

- APIs enable cross-platform integration

WARNING SIGNS

- Models stuck in notebooks or local environments

- No automated testing or deployment process

- Infrastructure can’t handle production loads

- Tech stack requires constant manual intervention

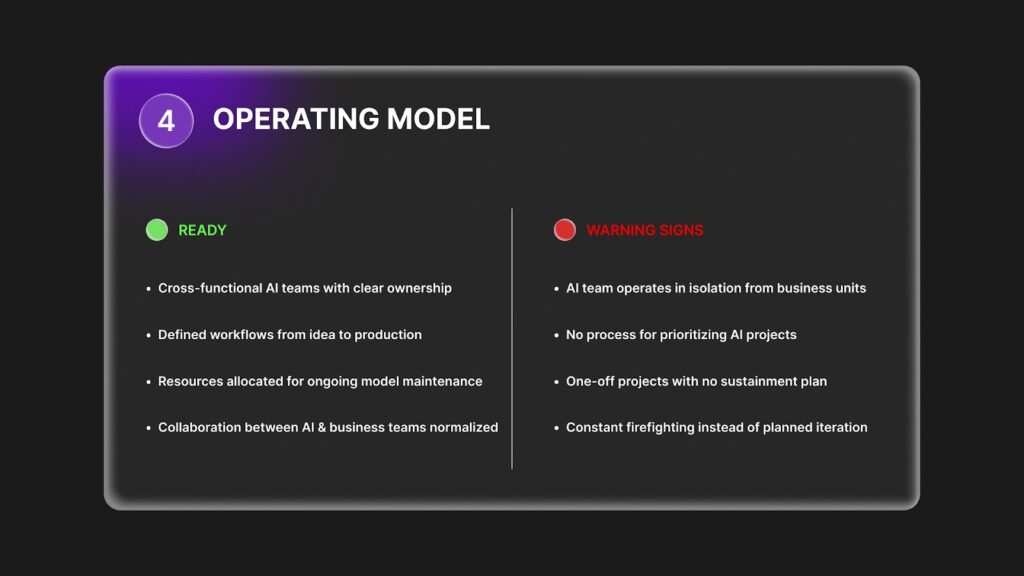

4. OPERATING MODEL

READY

- Cross-functional AI teams with clear ownership

- Defined workflows from idea to production

- Resources allocated for ongoing model maintenance

- Collaboration between AI and business teams normalized

WARNING SIGNS

- AI team operates in isolation from business units

- No process for prioritizing AI projects

- One-off projects with no sustainment plan

- Constant firefighting instead of planned iteration

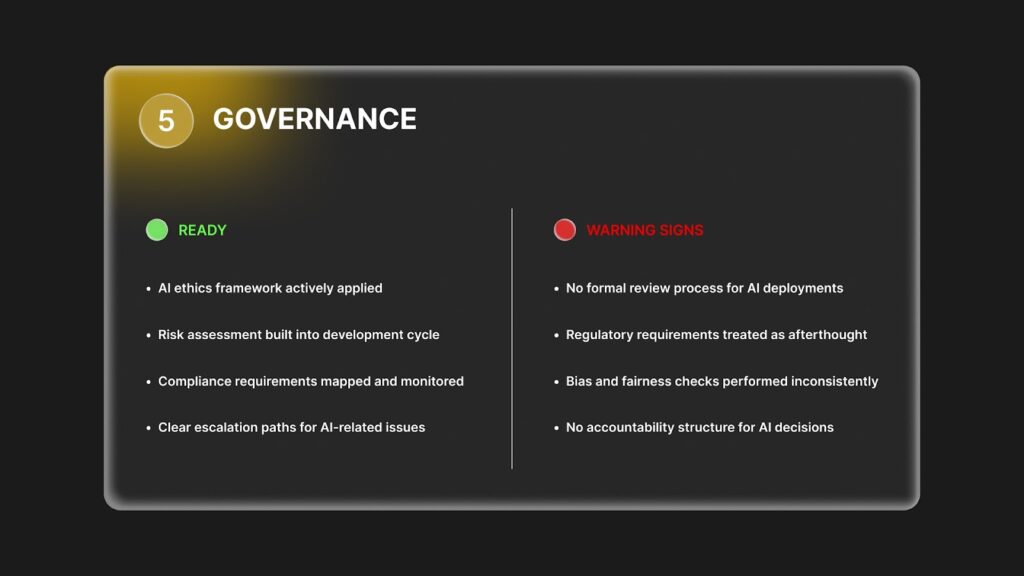

5. GOVERNANCE

READY

- AI ethics framework actively applied

- Risk assessment built into development cycle

- Compliance requirements mapped and monitored

- Clear escalation paths for AI-related issues

WARNING SIGNS

- No formal review process for AI deployments

- Regulatory requirements treated as afterthought

- Bias and fairness checks performed inconsistently

- No accountability structure for AI decisions

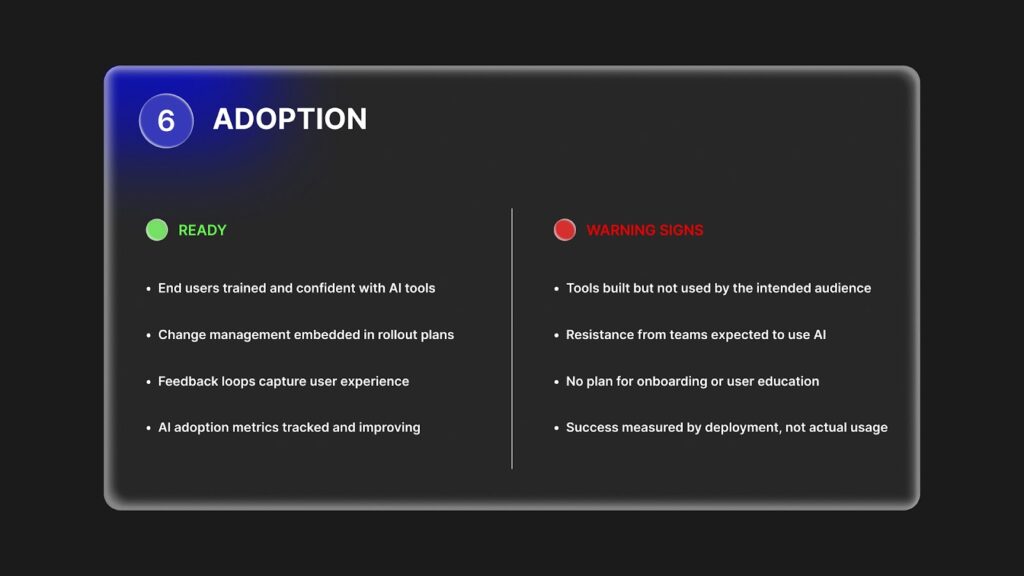

6. ADOPTION

READY

- End users trained and confident with AI tools

- Change management embedded in rollout plans

- Feedback loops capture user experience

- AI adoption metrics tracked and improving

WARNING SIGNS

- Tools built but not used by the intended audience

- Resistance from teams expected to use AI

- No plan for onboarding or user education

- Success measured by deployment, not actual usage

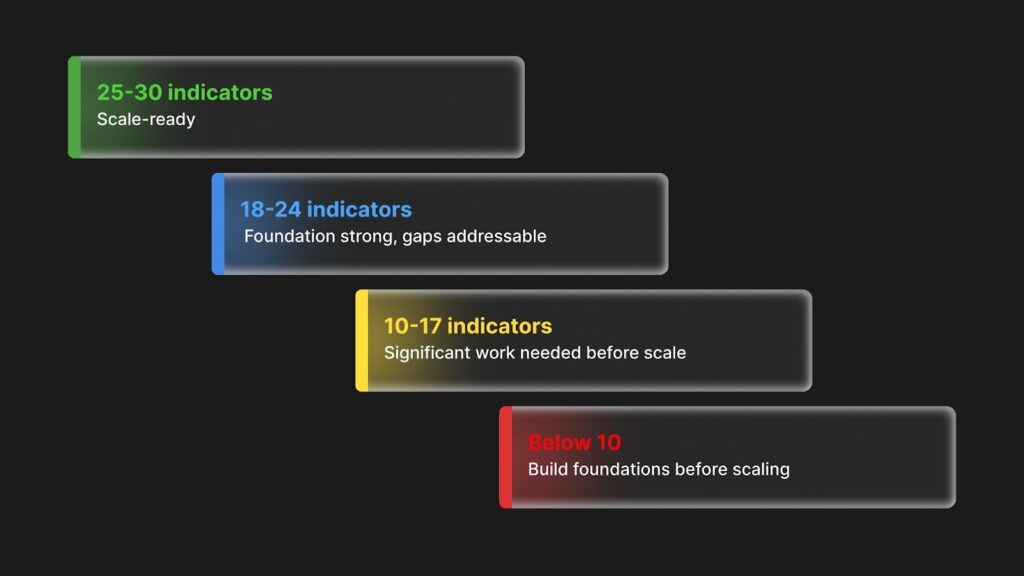

SCORING YOUR READINESS

Score each of the 6 dimensions from 0 to 5 according to how fully its “READY” indicators hold, then add the six scores (maximum 30):

- 25-30 points: Scale-ready

- 18-24 points: Foundation strong, gaps addressable

- 10-17 points: Significant work needed before scale

- Below 10: Build foundations before scaling
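The banding above can be sketched in a few lines of Python. This is a minimal illustration, assuming each dimension is scored on the 0-5 scale used in the roadmap's dimension audit; the dimension keys and the `readiness_band` function name are hypothetical, not part of the scorecard.

```python
from typing import Mapping, Tuple

# Hypothetical keys for the six scorecard dimensions.
DIMENSIONS = ("strategy", "data", "tech", "operating_model", "governance", "adoption")


def readiness_band(scores: Mapping[str, int]) -> Tuple[int, str]:
    """Sum six 0-5 dimension scores and map the total to a readiness band."""
    missing = set(DIMENSIONS) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for name in DIMENSIONS:
        if not 0 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 0 and 5")

    total = sum(scores[name] for name in DIMENSIONS)
    if total >= 25:
        band = "Scale-ready"
    elif total >= 18:
        band = "Foundation strong, gaps addressable"
    elif total >= 10:
        band = "Significant work needed before scale"
    else:
        band = "Build foundations before scaling"
    return total, band


example = {"strategy": 4, "data": 3, "tech": 3,
           "operating_model": 4, "governance": 2, "adoption": 3}
print(readiness_band(example))
```

Keeping the per-dimension scores alongside the total makes it easy to see which dimension is dragging the band down.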

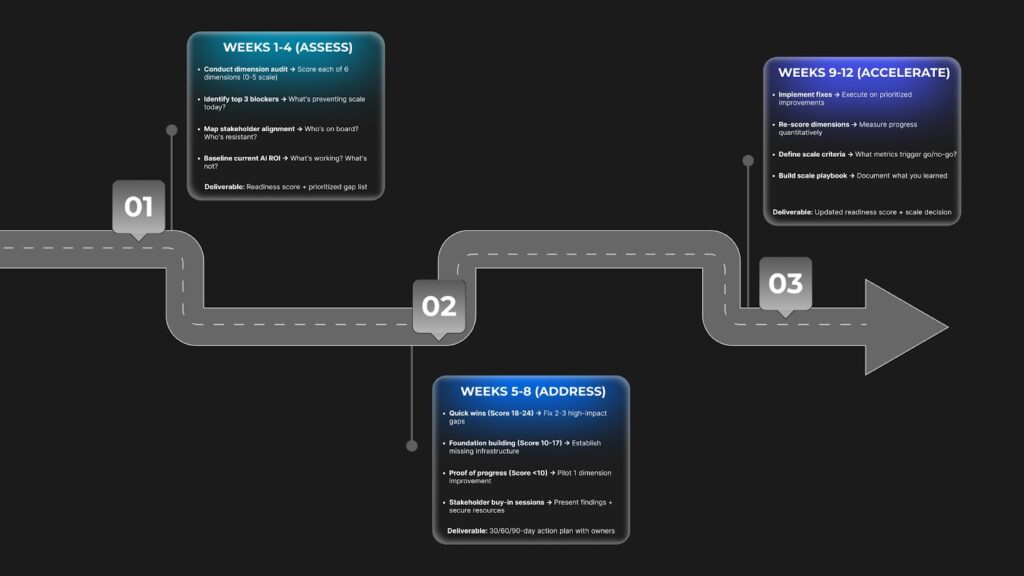

THE 90-DAY READINESS ROADMAP

PHASE 1: WEEKS 1-4 (ASSESS)

- Conduct dimension audit → Score each of 6 dimensions (0-5 scale)

- Identify top 3 blockers → What’s preventing scale today?

- Map stakeholder alignment → Who’s on board? Who’s resistant?

- Baseline current AI ROI → What’s working? What’s not?

Deliverable: Readiness score + prioritized gap list

PHASE 2: WEEKS 5-8 (ADDRESS)

- Quick wins (Score 18-24) → Fix 2-3 high-impact gaps

- Foundation building (Score 10-17) → Establish missing infrastructure

- Proof of progress (Score <10) → Pilot 1 dimension improvement

- Stakeholder buy-in sessions → Present findings + secure resources

Deliverable: 30/60/90-day action plan with owners

PHASE 3: WEEKS 9-12 (ACCELERATE)

- Implement fixes → Execute on prioritized improvements

- Re-score dimensions → Measure progress quantitatively

- Define scale criteria → What metrics trigger go/no-go?

- Build scale playbook → Document what you learned

Deliverable: Updated readiness score + scale decision

KEY METRICS TO TRACK:

- Readiness score improvement (target: +5-10 points)

- Time to deploy AI models (target: 50% reduction)

- Stakeholder alignment score (target: 80%+ buy-in)

- AI project success rate (target: >70%)
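As a rough sketch, the four targets above can be checked mechanically at the end of the 90 days. The field names, the use of days as the deployment-time unit, and the choice to treat the lower bound of "+5-10 points" as the pass mark are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class RoadmapMetrics:
    """Hypothetical container for before/after measurements."""
    baseline_score: int            # readiness score at the start of Phase 1
    current_score: int             # readiness score after re-scoring in Phase 3
    baseline_deploy_days: float    # time to deploy a model before the roadmap
    current_deploy_days: float     # time to deploy a model after the roadmap
    stakeholder_buy_in: float      # fraction of stakeholders on board, 0.0-1.0
    project_success_rate: float    # fraction of AI projects judged successful, 0.0-1.0


def check_targets(m: RoadmapMetrics) -> Dict[str, bool]:
    """Return, per metric, whether the target listed above has been met."""
    if m.baseline_deploy_days <= 0:
        raise ValueError("baseline_deploy_days must be positive")
    deploy_reduction = 1 - m.current_deploy_days / m.baseline_deploy_days
    return {
        "readiness_score": m.current_score - m.baseline_score >= 5,  # +5 points or more
        "time_to_deploy": deploy_reduction >= 0.50,                  # 50% reduction
        "stakeholder_alignment": m.stakeholder_buy_in >= 0.80,       # 80%+ buy-in
        "project_success_rate": m.project_success_rate > 0.70,       # strictly above 70%
    }


metrics = RoadmapMetrics(14, 21, 90, 40, 0.75, 0.70)
print(check_targets(metrics))
```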

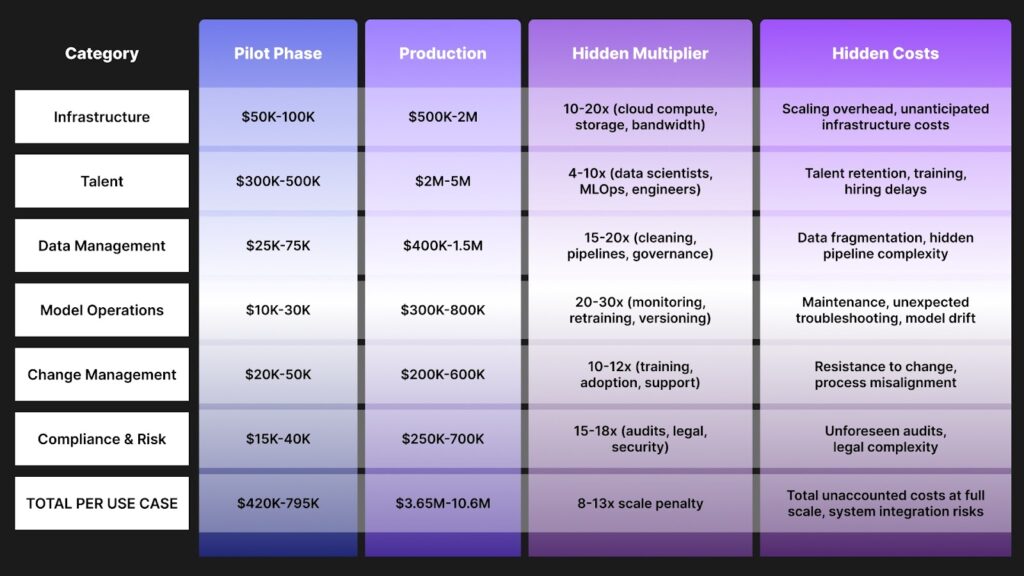

THE TRUE COST OF SCALING AI

Per AI Use Case at Enterprise Scale (Annual)

| Category | Pilot Phase | Production Scale | Hidden Multiplier | Hidden Costs |
| --- | --- | --- | --- | --- |
| Infrastructure | $50K-100K | $500K-2M | 10-20x (cloud compute, storage, bandwidth) | Scaling overhead, unanticipated infrastructure costs |
| Talent | $300K-500K | $2M-5M | 4-10x (data scientists, MLOps, engineers) | Talent retention, training, hiring delays |
| Data Management | $25K-75K | $400K-1.5M | 15-20x (cleaning, pipelines, governance) | Data fragmentation, hidden pipeline complexity |
| Model Operations | $10K-30K | $300K-800K | 20-30x (monitoring, retraining, versioning) | Maintenance, unexpected troubleshooting, model drift |
| Change Management | $20K-50K | $200K-600K | 10-12x (training, adoption, support) | Resistance to change, process misalignment |
| Compliance & Risk | $15K-40K | $250K-700K | 15-18x (audits, legal, security) | Unforeseen audits, legal complexity |
| TOTAL PER USE CASE | $420K-795K | $3.65M-10.6M | 8-13x scale penalty | Total unaccounted costs at full scale, system integration risks |

REALITY CHECK: Most enterprises underestimate total AI costs by 2-3x in year one
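The totals row in the table above can be re-derived from the six category ranges. The sketch below copies the dollar figures from the table (in thousands of USD) and assumes the multiplier is computed low-to-low and high-to-high; that pairing is an assumption, and some per-row figures in the Hidden Multiplier column appear to be rounded or paired differently, so per-category results may not match it exactly.

```python
from typing import Dict, Tuple

# (pilot_low, pilot_high, production_low, production_high) in thousands of USD,
# copied from the cost table.
CostRange = Tuple[float, float, float, float]

COSTS_K: Dict[str, CostRange] = {
    "Infrastructure":    (50, 100, 500, 2000),
    "Talent":            (300, 500, 2000, 5000),
    "Data Management":   (25, 75, 400, 1500),
    "Model Operations":  (10, 30, 300, 800),
    "Change Management": (20, 50, 200, 600),
    "Compliance & Risk": (15, 40, 250, 700),
}


def totals(costs: Dict[str, CostRange]) -> CostRange:
    """Sum each of the four columns across all categories."""
    rows = list(costs.values())
    return (
        sum(r[0] for r in rows),
        sum(r[1] for r in rows),
        sum(r[2] for r in rows),
        sum(r[3] for r in rows),
    )


def multiplier(row: CostRange) -> Tuple[float, float]:
    """Pilot-to-production multiplier, pairing low with low and high with high."""
    pilot_low, pilot_high, prod_low, prod_high = row
    return prod_low / pilot_low, prod_high / pilot_high


total_row = totals(COSTS_K)
low_x, high_x = multiplier(total_row)
print(total_row)                       # pilot and production totals in $K
print(round(low_x, 1), round(high_x, 1))
```

Running the same `multiplier` function on a single category row is a quick way to sanity-check a budget line before it goes into a business case.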

COST OPTIMIZATION LEVERS

Where smart enterprises save 30-50% on scaling costs:

- Start with 2-3 high-ROI use cases → Avoid spreading budget thin

- Reuse MLOps infrastructure → 60% cost reduction on subsequent models

- Invest in data foundations early → Prevents 10x rework costs later

- Automate model operations → Cuts ongoing maintenance by 40%

- Build internal talent pipelines → Reduces hiring premium by 25%

- Use existing tech stack → Avoids vendor lock-in and integration costs

BENCHMARK: Best-in-class enterprises achieve AI at $2-4M per use case vs. industry average of $6-10M

COST READINESS CHECKLIST

Before committing budget to scale, ask:

- Have we budgeted for an 8-13x cost increase from pilot to production?

- Do we have executive buy-in for a multi-year investment (3-5 years)?

- Have we accounted for hidden costs (technical debt, compliance, retention)?

- Is our readiness score 18+ to avoid the 2-3x inefficiency penalty?

- Do we have clear ROI targets (minimum 200% over 3 years)?

- Have we ring-fenced a budget for foundation building if needed?

FINAL REALITY CHECK: If you can’t confidently check 5+ boxes, you’re not ready to commit scale-level budget
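The go/no-go rule above amounts to a simple count over the six checklist questions. The sketch below is illustrative only: the checklist keys are hypothetical labels for the six questions, and `roi_pct` assumes ROI is defined as net benefit divided by cost, which the checklist does not spell out.

```python
from typing import Mapping

# Hypothetical short labels for the six checklist questions, in order.
CHECKLIST = (
    "budgeted_8_13x_increase",
    "multi_year_exec_buy_in",
    "hidden_costs_accounted",
    "readiness_score_18_plus",
    "roi_target_200pct_3yr",
    "foundation_budget_ring_fenced",
)


def ready_to_commit(answers: Mapping[str, bool], minimum: int = 5) -> bool:
    """True if at least `minimum` of the six boxes are confidently checked."""
    unknown = set(answers) - set(CHECKLIST)
    if unknown:
        raise ValueError(f"unknown checklist items: {sorted(unknown)}")
    checked = sum(1 for item in CHECKLIST if answers.get(item, False))
    return checked >= minimum


def roi_pct(total_benefit: float, total_cost: float) -> float:
    """ROI as a percentage, assuming ROI = (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost * 100


answers = {item: True for item in CHECKLIST}
answers["foundation_budget_ring_fenced"] = False
print(ready_to_commit(answers))          # five of six boxes checked
print(roi_pct(9_000_000, 3_000_000))     # 3-year benefit vs. 3-year cost
```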

Codewave is a UX-first design thinking and digital transformation services company that designs and engineers innovative mobile apps, cloud, and edge solutions.