Introduction

Plant diseases destroy up to 40% of global crop production annually, costing the world economy over $220 billion each year, according to the FAO Plant Production and Protection Division. For staple crops, the toll is staggering: rice loses 30% of potential yield, maize 22.5%, and wheat 21.5% to pests and diseases, based on a landmark 2019 study in Nature Ecology & Evolution. With 733 million people facing hunger globally, every percentage point of crop loss directly threatens food security.

Traditional detection methods cannot keep pace. Visual inspection by agronomists is subjective, varies by expertise, and cannot scale across thousands of acres.

Laboratory testing delivers accuracy but takes 11–21 days for routine diagnoses at facilities like Texas A&M's Plant Disease Diagnostic Laboratory. That delay is fatal when diseases spread exponentially. By the time symptoms are visible or lab results return, infection has often reached neighboring plants, making containment costly or impossible.

AI changes that equation. This article explains how methods like Convolutional Neural Networks, Vision Transformers, and Generative AI detect crop diseases from images with over 90% accuracy, often in milliseconds, and how farms move from concept to deployed system.

TLDR

- AI models detect crop diseases from leaf images with 90–99% accuracy, often delivering diagnoses in under 30 milliseconds

- Early detection enables targeted interventions before disease spreads, reducing crop loss and cutting pesticide use by 23–85% in the studies cited below

- Detection pipelines use smartphone cameras, drones, or IoT sensors to capture images and deliver real-time treatment alerts

- Transfer learning, GANs, and lightweight frameworks address key barriers like data scarcity, climate variability, and edge deployment constraints

Why Traditional Crop Disease Detection Falls Short

Two approaches dominate today: visual inspection by agronomists and laboratory-based diagnostic testing. Both fail at scale.

Visual inspection depends entirely on the skill level of the expert conducting the scouting. Symptoms are often subtle, especially in early stages when intervention would be most effective. Even experienced agronomists cannot survey thousands of hectares quickly or consistently, and fatigue or lighting conditions introduce subjectivity. According to a 2020 study in Frontiers in Plant Science, extension agents achieved just 40–58% accuracy diagnosing cassava diseases, while farmers managed only 18–31%.

Laboratory testing is accurate but time-intensive. Samples must be collected, transported, and processed. Turnaround times average 11–21 days during peak seasons — an eternity when bacterial or fungal pathogens double their spread every few days.
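To put the lab delay in perspective, here is a rough back-of-the-envelope sketch. It assumes pure exponential growth and a 3-day doubling time; both are illustrative assumptions, not figures from the studies cited here, and `spread_factor` is a hypothetical helper name.

```python
def spread_factor(days_waiting: float, doubling_days: float) -> float:
    """How many times larger an outbreak is after waiting, under pure exponential growth."""
    return 2 ** (days_waiting / doubling_days)


# Assumed doubling time of 3 days (illustrative only).
DOUBLING_DAYS = 3.0

for wait in (11, 21):
    factor = spread_factor(wait, DOUBLING_DAYS)
    print(f"{wait}-day lab turnaround -> roughly {factor:.0f}x the initial infected area")
```

Real epidemics slow down as they run out of susceptible plants, so treat the result as an upper-bound intuition rather than a forecast.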

Research describes traditional methods as slow, labor-intensive, and dependent on expert knowledge, limiting scalability precisely when rapid, widespread monitoring is most needed.

Both approaches share the same fatal flaw: by the time humans notice symptoms or labs confirm a diagnosis, disease has already spread beyond containment. Farmers are left choosing between treating entire fields prophylactically — wasting chemicals and money — or waiting for visible damage and sacrificing yield. Neither is acceptable when margins are thin and regulations tighten.

The core limitations come down to three compounding problems:

- Speed: Lab turnarounds and scouting schedules can't match pathogen spread rates

- Scale: Manual scouting becomes economically impractical on farms spanning thousands of hectares

- Subjectivity: Human diagnosis accuracy drops sharply without specialist training

At that scale, labor costs alone can exceed the value of early intervention, forcing growers to rely on scheduled spraying rather than targeted, responsive treatment.

Core AI Methods for Crop Disease Detection

Convolutional Neural Networks (CNNs)

CNNs analyze plant leaf images by learning hierarchical visual features through multiple convolutional layers. Early layers detect edges, colors, and textures. Deeper layers recognize patterns like lesion shapes, chlorosis distribution, or necrotic spots. The final layers classify the image as healthy or diseased, and if diseased, identify the specific pathogen class.
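The "early layers detect edges" idea can be shown with a single hand-written filter. The sketch below is a minimal pure-Python illustration, not a trained network: the 5x5 `leaf_patch` is toy data, and the Sobel kernel is fixed by hand, whereas a real CNN learns its kernels from labeled images.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation, the core operation of a convolutional layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Toy grayscale patch: healthy tissue (0) on the left, a lesion (1) on the right.
leaf_patch = [[0, 0, 0, 1, 1] for _ in range(5)]

# Sobel kernel that responds to left-to-right intensity changes (vertical edges).
sobel_x = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

edges = convolve2d(leaf_patch, sobel_x)
for row in edges:
    print(row)
```

The output is zero over the uniform region and non-zero where the window overlaps the lesion boundary, which is the kind of signal deeper layers combine into lesion shapes and textures.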

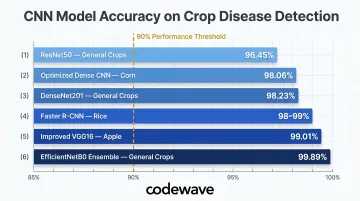

CNNs dominate crop disease detection because they scale efficiently and learn directly from labeled image data without manual feature engineering. Popular architectures applied to agriculture include:

- ResNet50: Achieved 96.45% accuracy on PlantVillage (a benchmark dataset of 54,305 images covering 38 disease classes across 14 crops)

- DenseNet201: Reached 98.23% accuracy

- EfficientNetB0 ensembles: Delivered 99.89% accuracy on PlantVillage

Real-world crop-specific results from a 2023 Plant Pathology Journal study include:

- Improved VGG16 on apple leaf disease: 99.01%

- Optimized Dense CNN on corn leaf: 98.06%

- Faster R-CNN on rice blast, brown spot, and hispa diseases: 98–99% detection accuracy

These architectures have been validated on tomato, potato, maize, grape, soybean, and other staple crops, consistently reaching the 90–99% accuracy range when trained on high-quality, diverse datasets.

Transfer Learning Models

Transfer learning allows practitioners to fine-tune pre-trained models—originally trained on massive image libraries like ImageNet—on much smaller crop disease datasets. Instead of training a CNN from scratch (which demands tens of thousands of labeled images), you adapt a model that already understands edges, textures, and shapes to recognize plant-specific symptoms.

When to use transfer learning:

- Limited labeled disease images available (fewer than 5,000 per class)

- Budget or time constraints prevent large-scale data collection

- Rapid prototyping needed to validate feasibility

When to train from scratch:

- Large, crop-specific annotated datasets available (10,000+ images per class)

- Unique imaging conditions (spectral bands, satellite resolution) differ from natural RGB images

- Maximum accuracy required for production deployment

Transfer learning cuts training time from weeks to days and lowers data requirements by 50–80%—enough to make production-ready models viable on budgets that would rule out full training runs.
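The two checklists above can be condensed into a small rule-of-thumb helper. This is only a sketch of the thresholds quoted in this article (5,000 and 10,000 images per class); the function name, the `natural_rgb` flag, and the "either" middle band are assumptions added for illustration.

```python
def choose_training_strategy(images_per_class: int, natural_rgb: bool = True) -> str:
    """Rule-of-thumb strategy choice based on the thresholds quoted in the article."""
    if images_per_class < 5_000:
        # Too little data to train a deep network from scratch.
        return "transfer_learning"
    if images_per_class >= 10_000 or not natural_rgb:
        # Plenty of data, or imagery unlike the RGB photos pre-trained models have seen.
        return "train_from_scratch"
    # Between the two thresholds either approach can work; prototype with transfer learning.
    return "either"


print(choose_training_strategy(800))                       # small RGB dataset
print(choose_training_strategy(12_000))                    # large annotated dataset
print(choose_training_strategy(7_000, natural_rgb=False))  # multispectral imagery
```

In practice the decision also depends on budget and accuracy targets, so use a helper like this as a starting point for discussion rather than a hard gate.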

Vision Transformers (ViT)

While CNNs build up features through small local receptive fields, Vision Transformers split the image into patches and use attention mechanisms to evaluate relationships between all patches simultaneously. This makes ViTs particularly effective at detecting subtle, early-stage symptoms distributed across the leaf rather than concentrated in one spot.
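The attention idea can be sketched in a few lines. The example below is a simplified scaled dot-product self-attention over four toy patch embeddings. It assumes identity projections; a real ViT adds learned query, key, and value matrices, positional embeddings, and multiple heads, and the embedding values here are made up.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(patches):
    """Scaled dot-product self-attention with identity projections (no learned weights)."""
    d = len(patches[0])
    weights = []
    for query in patches:
        scores = [sum(a * b for a, b in zip(query, key)) / math.sqrt(d) for key in patches]
        weights.append(softmax(scores))
    outputs = [
        [sum(w * value[j] for w, value in zip(row, patches)) for j in range(d)]
        for row in weights
    ]
    return weights, outputs


# Toy embeddings: patches 0 and 3 sit at opposite ends of the leaf but look alike.
patches = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.1]]
weights, outputs = self_attention(patches)
print([round(w, 3) for w in weights[0]])
```

Patch 0 attends as strongly to the distant patch 3 as to itself, which is the mechanism that lets attention link symptoms scattered across a leaf.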

A 2025 study in Scientific Reports demonstrated that the PLA-ViT model achieved 99.1% accuracy with just 12 milliseconds inference time—outperforming CNN-based models that ranged from 95.2% to 97.5% accuracy with longer inference times.

Key ViT advantages:

- Better at capturing spatial relationships between distant leaf regions

- More effective at detecting diseases with diffuse symptoms (viral infections, nutrient deficiencies)

- Handles multi-modal inputs—satellite imagery, weather conditions, soil sensor readings—to predict disease risk that visual inspection alone would miss

Trade-offs: ViTs typically require more training data than CNNs and demand more computational resources. However, when sufficient data exists, they often surpass CNNs in detecting early-stage infections before symptoms become obvious to human scouts.

Generative AI and GANs

Generative Adversarial Networks (GANs) directly address agriculture's most persistent bottleneck: lack of training data. Collecting labeled images across every disease stage, lighting condition, and crop variety is expensive and slow. GANs generate labeled synthetic images of diseased plants at each disease stage, expanding datasets without additional fieldwork.

A 2020 study in Frontiers in Plant Science showed that using WGAN-GP (Wasserstein GAN with Gradient Penalty) to augment just 873 training images improved classification accuracy from 60.4% to 84.78%, a gain of about 24.4 percentage points. Classic data augmentation (rotation, flipping, brightness adjustment) reached 80.57%, so GAN-generated images added another 4.21 percentage points on top.
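The gains quoted above are differences in percentage points, which is easy to confuse with relative improvement. A quick check using only the three accuracy figures from that study:

```python
baseline = 60.4      # accuracy (%) with the original 873 images
classic_aug = 80.57  # accuracy (%) with rotation, flipping, brightness adjustment
gan_aug = 84.78      # accuracy (%) with WGAN-GP augmentation

gain_vs_baseline = round(gan_aug - baseline, 2)     # percentage points
gain_vs_classic = round(gan_aug - classic_aug, 2)   # percentage points
relative_gain = round(100 * (gan_aug - baseline) / baseline, 1)  # percent of baseline

print(f"GAN vs baseline: +{gain_vs_baseline} points ({relative_gain}% relative)")
print(f"GAN vs classic augmentation: +{gain_vs_classic} points")
```

The absolute gain is 24.38 points, while the relative improvement over the baseline is about 40%, so it matters which of the two a report is quoting.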

Other GenAI roles in disease detection:

- Anomaly detection: Train a model on healthy plant images; flag deviations as potential disease

- Cross-modal synthetic data: Generate hyperspectral or infrared training images to complement RGB datasets, improving model robustness across sensor types
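The anomaly-detection idea from the list above can be illustrated with a deliberately simple stand-in. This sketch is not a generative model: it fits a mean and standard deviation to one hypothetical feature (the green-channel fraction of a leaf image) from healthy samples and flags large deviations. The feature, the sample values, and the threshold of 3 standard deviations are all assumptions.

```python
from statistics import mean, stdev


def fit_healthy_profile(values):
    """Summarize the healthy population by its mean and sample standard deviation."""
    return mean(values), stdev(values)


def is_anomalous(value, profile, z_threshold=3.0):
    """Flag a sample whose feature lies more than z_threshold deviations from healthy."""
    mu, sigma = profile
    return abs(value - mu) / sigma > z_threshold


# Hypothetical green-channel fraction measured on healthy leaves only.
healthy_green_ratio = [0.52, 0.55, 0.50, 0.53, 0.54, 0.51, 0.56, 0.52]
profile = fit_healthy_profile(healthy_green_ratio)

print(is_anomalous(0.53, profile))  # typical healthy leaf
print(is_anomalous(0.31, profile))  # strongly discolored leaf
```

A production system would replace the single feature with a learned representation (for example, the reconstruction error of a model trained on healthy images), but the train-on-healthy, flag-the-outlier logic is the same.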

Important caveat: GANs provide the greatest benefit when baseline datasets are very small. A 2025 study in Heliyon found that GAN improvements were not statistically significant when training sets already exceeded several thousand images per class. Reserve GANs for datasets under a few thousand images per class—not as a default augmentation step.

How an AI Crop Disease Detection Pipeline Works End-to-End

Step 1 — Data Capture

Images are captured through one of three methods:

- Smartphone cameras let farmers photograph leaves in-field via a mobile app. Good lighting and close-up shots (typically 10–20 cm from the leaf surface) are required for reliable results.

- Drone/UAV imaging uses RGB or multispectral cameras to survey fields at 10–50 meters altitude, capturing thousands of images per flight — well-suited for large-scale monitoring.

- IoT-connected sensors — fixed cameras mounted near crops — capture images at scheduled intervals, supporting continuous monitoring without human intervention.

Resolution requirements: Minimum 1–2 megapixels for smartphone/IoT; 5+ megapixels for drone imagery, sufficient to detect early symptoms when images are cropped to individual plants.
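A capture app can enforce these minimums before an image ever reaches the model. The following is a minimal sketch: the lower bound of each range above is used as the threshold, and the `MIN_MEGAPIXELS` table and function name are hypothetical.

```python
# Minimum megapixels per capture source, taken from the lower bounds quoted above.
MIN_MEGAPIXELS = {"smartphone": 1.0, "iot": 1.0, "drone": 5.0}


def meets_resolution(width_px: int, height_px: int, source: str) -> bool:
    """Return True if the image has enough pixels for the given capture source."""
    megapixels = width_px * height_px / 1_000_000
    return megapixels >= MIN_MEGAPIXELS[source]


print(meets_resolution(1280, 960, "smartphone"))  # about 1.2 MP
print(meets_resolution(1920, 1080, "drone"))      # about 2.1 MP
print(meets_resolution(4000, 3000, "drone"))      # 12 MP
```

Rejecting low-resolution captures on the device gives the user a chance to retake the photo while still standing next to the plant.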

Step 2 — Preprocessing

Before reaching the model, raw images go through four standardization steps:

- Resizing to uniform dimensions (typically 224×224 or 256×256 pixels)

- Color normalization to reduce brightness and contrast variation across different lighting conditions

- Background suppression using segmentation masks to remove soil, sky, and other non-plant elements

- Data augmentation (training only) — rotation, flipping, zoom, and brightness shifts — to improve generalization

These steps ensure the model receives consistent input regardless of capture conditions.
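Three of these steps (resizing, normalization, and a flip augmentation) can be sketched without any imaging library. The example below works on a tiny grayscale grid for readability; a real pipeline would use an image library, resize to 224×224, process three color channels, and add segmentation-based background suppression, which is omitted here. All function names are illustrative.

```python
def resize_nearest(image, out_h, out_w):
    """Nearest-neighbor resize of a 2D grid of pixel values."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]


def normalize(image):
    """Shift to zero mean and scale to unit variance to damp brightness and contrast shifts."""
    pixels = [p for row in image for p in row]
    mu = sum(pixels) / len(pixels)
    var = sum((p - mu) ** 2 for p in pixels) / len(pixels)
    std = var ** 0.5 or 1.0  # fall back to 1.0 on a perfectly flat image
    return [[(p - mu) / std for p in row] for row in image]


def horizontal_flip(image):
    """Training-only augmentation: mirror the image left to right."""
    return [row[::-1] for row in image]


raw = [
    [10, 20, 30, 40],
    [50, 60, 70, 80],
    [90, 100, 110, 120],
    [130, 140, 150, 160],
]
small = resize_nearest(raw, 2, 2)
model_input = normalize(small)
augmented = horizontal_flip(model_input)
print(small)
```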

Step 3 — Model Inference

Once preprocessed, the image passes through three inference stages:

- Feature extraction: Convolutional or attention layers identify visual patterns — lesions, discoloration, texture changes

- Classification: The model assigns the image to a disease category (or healthy) based on learned patterns

- Confidence score: Outputs a probability (e.g., 87% confidence of bacterial spot on tomato)

Optimized models complete inference in 12–30 milliseconds on mobile devices or edge hardware, making real-time diagnosis practical in the field.
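The classification and confidence steps usually come down to a softmax over the model's raw output scores. The sketch below assumes a three-class model and made-up logits; the class names and values are illustrative only.

```python
import math

# Hypothetical label set for a tomato model.
CLASSES = ["healthy", "tomato_bacterial_spot", "tomato_late_blight"]


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(logits, classes=CLASSES):
    """Return the most likely class and its softmax probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return classes[best], probs[best]


label, confidence = classify([0.2, 2.6, 0.4])
print(f"{label}: {confidence:.0%} confidence")
```

Softmax probabilities are not always well calibrated, so a reported confidence is best treated as a ranking signal unless the model has been calibrated on field data.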

Step 4 — Output and Alerting

Inference results are translated into three types of actionable output:

- Disease type — the specific pathogen identified (e.g., late blight, powdery mildew, cassava mosaic virus)

- Severity stage — early, moderate, or advanced infection

- Recommended action — targeted fungicide application, row quarantine, irrigation adjustment, or plant removal

Outputs appear via mobile app dashboard or web interface. For drone-captured images, GPS coordinates guide field crews directly to affected areas.
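One way to structure this output is a small alert record that bundles the three fields with optional GPS coordinates. The sketch below is hypothetical throughout: the action table is a placeholder rather than agronomic advice, and the 0.7 confidence cutoff is an assumed value.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Placeholder mapping; a real system would source this from agronomists.
RECOMMENDED_ACTIONS = {
    ("late_blight", "early"): "targeted fungicide application",
    ("late_blight", "advanced"): "plant removal",
    ("powdery_mildew", "early"): "targeted fungicide application",
}


@dataclass
class Alert:
    disease: str
    severity: str
    confidence: float
    action: str
    gps: Optional[Tuple[float, float]] = None  # (latitude, longitude) for drone captures


def build_alert(disease, severity, confidence, gps=None, min_confidence=0.7):
    """Turn an inference result into an actionable alert, deferring to a human when unsure."""
    if confidence < min_confidence:
        action = "re-scan or refer to an agronomist"
    else:
        action = RECOMMENDED_ACTIONS.get((disease, severity), "refer to an agronomist")
    return Alert(disease, severity, confidence, action, gps)


alert = build_alert("late_blight", "early", 0.91, gps=(12.9716, 77.5946))
print(alert)
```

Routing low-confidence or unmapped cases to a person keeps the system from issuing treatment advice it cannot support.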

Step 5 — Edge and Cloud Deployment

Edge deployment runs models on mobile devices, NVIDIA Jetson Nano, or Raspberry Pi, offering three core advantages in rural settings:

- Operates offline where connectivity is unreliable

- Reduces latency to under 1 second from image capture to diagnosis

- Frameworks like TensorFlow Lite compress models to 5–20 MB without major accuracy loss

Example: A 2026 study in Agriculture deployed YOLOv8 + ResNet-18 on a Raspberry Pi 5, achieving 862 ms end-to-end latency and 90% detection accuracy for approximately $150 in hardware costs.

Cloud-based deployment suits farms with reliable connectivity. It supports centralized reporting, periodic model updates, and aggregated analytics across multiple farms — and allows larger CNNs or Vision Transformers that exceed edge device capabilities.

Most production systems use a hybrid approach: edge inference for real-time alerts, with periodic cloud syncing for data aggregation and model retraining.
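The hybrid pattern can be sketched as a local queue that records every diagnosis immediately and uploads when a connection appears. This is a minimal illustration under stated assumptions: `EdgeSyncQueue` is a hypothetical class, `upload` stands in for whatever cloud client a deployment uses, and persistence to disk and retry handling are left out.

```python
from collections import deque


class EdgeSyncQueue:
    """Buffer on-device diagnoses and push them to the cloud when connectivity allows."""

    def __init__(self, upload):
        self._upload = upload    # callable that sends one record to the cloud
        self._pending = deque()

    def record(self, diagnosis):
        """Store a local inference result; never waits on the network."""
        self._pending.append(diagnosis)

    def sync(self, online: bool) -> int:
        """Upload queued results if online; return how many were sent."""
        sent = 0
        while online and self._pending:
            self._upload(self._pending[0])
            self._pending.popleft()  # drop the record only after upload returned
            sent += 1
        return sent


cloud_store = []  # stand-in for a remote endpoint
queue = EdgeSyncQueue(cloud_store.append)
queue.record({"disease": "late_blight", "confidence": 0.91})
queue.record({"disease": "healthy", "confidence": 0.97})

print(queue.sync(online=False))  # no connectivity in the field
print(queue.sync(online=True))   # back in range
```

Because a record is removed only after the upload call returns, an exception during upload leaves it queued for the next sync attempt.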

Real-World Benefits and Impact of AI Detection

Quantified yield protection: Cassava mosaic disease (CMD) causes 20–95% fresh root yield reductions, costing the global economy $1.9–$2.7 billion annually, according to a 2024 study in Agriculture. Early detection enables farmers to select disease-free planting material and remove infected plants before the virus spreads.

The PlantVillage Nuru app—a real-world AI detection tool—achieved 93% accuracy for CMD diagnosis using a six-leaf inspection protocol, far outperforming extension agents (40–58% accuracy) and farmers (18–31% accuracy). By catching infections before they become catastrophic, AI-assisted detection directly prevents the severe yield losses documented above.

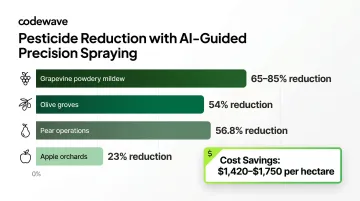

Pesticide reduction: Precision disease detection enables targeted treatment rather than blanket prophylactic spraying. Research on camera-based precision spraying systems shows:

- Site-specific disease spraying reduced pesticide use by 65–85% for grapevine powdery mildew

- Apple orchards achieved 23% pesticide reduction

- Olive groves reduced application by 54%

- Pear operations cut pesticide use by 56.8%

Conventional sprayers deposit less than 30% of pesticides on target, wasting 60–70% to off-target drift and ground loss. Targeted treatment based on AI detection saves $1,420–$1,750 per hectare in pesticide costs alone—approximately one-third of total production costs for tree fruit growers.

Downstream sustainability benefits: Reduced chemical inputs lower environmental load, decrease contamination of soil and water, and minimize harm to beneficial insects. Farmers save money on inputs while meeting increasingly strict environmental regulations.

Translating these benefits from pilot to field scale is where implementation quality matters. Codewave works with agriculture clients to build custom AI detection pipelines—connecting mobile and drone data capture to alert systems that deliver actionable insights at the farm level, so accuracy in the lab becomes measurable crop protection in practice.

Key Challenges and Practical Considerations

Data quality and labeling burden: Building a reliable detection model requires large, accurately labeled datasets covering different crops, disease stages, lighting conditions, and geographies. A single crop may have 10–20 distinct diseases, each requiring 500–2,000 labeled images per stage (early, moderate, severe) across varied lighting, angles, and backgrounds. Multiply this by multiple crops, and the annotation workload becomes the hardest part of any agri-AI project.
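Multiplying the ranges above shows why annotation dominates the budget. The calculation uses only the figures in the preceding paragraph (10–20 diseases, three severity stages, 500–2,000 images per stage); the helper name is illustrative.

```python
STAGES = 3  # early, moderate, severe


def images_needed(diseases: int, images_per_stage: int, stages: int = STAGES) -> int:
    """Labeled images required for one crop."""
    return diseases * stages * images_per_stage


low = images_needed(diseases=10, images_per_stage=500)
high = images_needed(diseases=20, images_per_stage=2_000)
print(f"Per crop: {low:,} to {high:,} labeled images")
```

That is 15,000 to 120,000 labeled images for a single crop, before accounting for rejected or mislabeled samples.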

Strategies to address data challenges:

- Use transfer learning to reduce data requirements by 50–80%

- Deploy GANs to generate synthetic images when field data is limited

- Partner with agronomists or agricultural universities for expert annotation

- Crowdsource labeling through farmer cooperatives, with expert validation for quality control

Model generalization and environmental variability: A model trained on tomato leaf images in California may perform poorly on tomatoes grown in India due to differences in climate, lighting, humidity, and disease symptom presentation. Research shows accuracy drops approximately 14 percentage points when models trained on lab-controlled datasets (99%+ accuracy) are deployed in real field conditions (85% F1-score), according to a 2025 study in Scientific Reports.

Key factors causing generalization failure:

- Variations in lighting, background, and leaf orientation in uncontrolled environments

- Geographic and climate differences affecting symptom appearance

- Dataset-specific optimization leading to poor cross-crop performance

To close this gap:

- Test models across varied field conditions before deployment

- Collect region-specific training data for local fine-tuning

- Validate performance across multiple seasons and climates before scaling
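Testing across conditions is easier when every evaluation sample carries a condition tag and metrics are reported per group. The sketch below computes a binary F1 score per condition on made-up predictions; the data, labels, and function names are illustrative and do not reproduce the cited study.

```python
def f1_score(y_true, y_pred, positive="diseased"):
    """Binary F1 for the positive class; returns 0.0 when there are no true positives."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def evaluate_by_condition(samples):
    """samples: iterable of (condition, true_label, predicted_label) tuples."""
    grouped = {}
    for condition, truth, pred in samples:
        truths, preds = grouped.setdefault(condition, ([], []))
        truths.append(truth)
        preds.append(pred)
    return {cond: f1_score(t, p) for cond, (t, p) in grouped.items()}


# Toy evaluation set: clean lab photos versus cluttered field photos.
samples = [
    ("lab", "diseased", "diseased"), ("lab", "diseased", "diseased"),
    ("lab", "healthy", "healthy"), ("lab", "healthy", "healthy"),
    ("field", "diseased", "diseased"), ("field", "diseased", "healthy"),
    ("field", "healthy", "healthy"), ("field", "healthy", "diseased"),
]
scores = evaluate_by_condition(samples)
print(scores)
```

A single pooled score would hide the gap; splitting by condition, region, or season makes it visible before deployment.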

Integration and adoption barriers: Getting AI tools into farmers' hands requires more than accurate models. Connectivity constraints in rural areas demand offline-capable edge deployment. Mobile interfaces must be simple enough for users with limited technical literacy—clear visual outputs (green/yellow/red alerts) and specific action steps outperform complex dashboards every time.

Trust is the other barrier. Most growers won't act on an AI alert until they've seen it perform consistently across at least one or two full growing seasons. Running AI diagnoses alongside agronomist oversight during initial rollout gives farmers a reference point that builds confidence in automated recommendations over time.
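During such a side-by-side rollout, a simple agreement rate between AI and agronomist diagnoses gives growers a number to track from season to season. This is a minimal sketch with made-up labels; `agreement_rate` is a hypothetical helper, and more careful studies would also use chance-corrected measures.

```python
def agreement_rate(ai_labels, agronomist_labels):
    """Fraction of cases where the AI diagnosis matches the agronomist's."""
    if len(ai_labels) != len(agronomist_labels):
        raise ValueError("label lists must be the same length")
    if not ai_labels:
        return 0.0
    matches = sum(a == b for a, b in zip(ai_labels, agronomist_labels))
    return matches / len(ai_labels)


ai = ["late_blight", "healthy", "late_blight", "mosaic"]
expert = ["late_blight", "healthy", "healthy", "mosaic"]
print(f"Agreement this season: {agreement_rate(ai, expert):.0%}")
```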

Frequently Asked Questions

How is AI used in plant disease detection?

AI analyzes images of plant leaves using deep learning models like CNNs or Vision Transformers to identify visual symptoms of disease, classify the disease type, assess severity, and recommend treatment—in real time via smartphone or drone-captured imagery.

Which AI is best for disease diagnosis?

No single "best" AI exists. CNNs are the most widely deployed, achieving 90–99% accuracy on benchmark datasets, and fine-tuning pre-trained CNNs (ResNet, EfficientNet) through transfer learning works well when labeled data is limited. Vision Transformers detect subtle early-stage symptoms across the full image but require significantly more training data.

What AI-based tool helps detect crop diseases using images?

Tools range from custom CNN models trained on datasets like PlantVillage to mobile apps like PlantVillage Nuru, which runs a deep learning model on-device to classify leaf diseases from a smartphone photo taken in the field.

Can AI detect crop diseases in real time?

Yes. Optimized models deployed on mobile devices or edge hardware like Raspberry Pi or NVIDIA Jetson can run model inference in as little as 12–30 milliseconds and keep end-to-end latency under a second, delivering a disease diagnosis and recommended action in the field without needing a cloud connection.

What data is needed to train an AI crop disease detection model?

Training typically requires 500–2,000 labeled images per disease class and severity stage, covering healthy and diseased crops across varying lighting conditions and crop varieties. Data augmentation and GAN-generated synthetic images can expand limited datasets when real field data is scarce.

How accurate is AI in detecting plant diseases?

Accuracy varies by model, crop type, and dataset quality. State-of-the-art deep learning models consistently achieve 90–99% accuracy on benchmark datasets like PlantVillage, with leading architectures reaching 98–99% in controlled conditions. Real-world field performance typically drops to 85–95% due to environmental variability, lighting differences, and symptom variation across regions. Even so, this far exceeds the human accuracy reported for cassava disease diagnosis (40–58% for extension agents, 18–31% for farmers).