The tension is clear: legal work demands precision, defensibility, and professional judgment, yet hours vanish into repetitive research, document review, and compliance tracking. Agentic AI addresses this gap head-on. This article covers what agentic AI is, why it fits legal practice, real use cases already in production, and how to adopt it responsibly.

TLDR:

- Agentic AI autonomously plans, executes, and self-corrects multi-step legal workflows—not just generating text, but completing end-to-end tasks

- Legal work's structured rules and contextual complexity make it ideal for human-in-the-loop AI systems

- Early adopters report 60%+ reductions in contract review time, 25% fewer hours on legal inquiries, and $1.2M in measured benefits

- Trust requires audit trails, citation traceability, and purpose-built legal AI—not general internet-trained models

- Start with low-risk pilots, validate outputs rigorously, and build AI fluency alongside technical deployment

What Is Agentic AI—And How Is It Different from Generative AI?

Agentic AI refers to AI systems that autonomously plan, break down multi-step tasks, execute them, self-correct, and loop back for human review. Unlike generative AI (GenAI), which answers prompts with generated content, agentic AI pursues goals. According to research grounded in NIST AI RMF 1.0, generative AI "excels primarily at content production," while agentic AI describes "autonomous systems capable of planning, reasoning, and executing multi-step workflows with minimal human intervention."

Here's the practical difference: GenAI drafts a clause when you ask. Agentic AI reviews an entire contract, flags deviations from standard terms, cross-references precedents, summarizes risk, and delivers a complete analysis—all in one autonomous workflow.

How Agentic AI Works

Agentic AI is powered by large language models (LLMs) combined with:

- Access to databases, document repositories, and external platforms

- Planning logic that breaks complex requests into executable subtasks

- Memory that retains context across the full workflow without re-prompting

This architecture allows agents to work across documents, legal databases, and case management platforms without constant human re-prompting.
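The three components above (tool access, planning logic, and memory) can be sketched as a toy loop. This is a minimal illustration, not how any particular product works: the `Agent` class, the fixed two-step plan, and the `search` and `summarize` tools are all hypothetical stand-ins, and a real system would have an LLM generate the plan and call real databases.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Toy agent: a planner, a set of tools, and a memory list."""
    tools: dict[str, Callable[[str], str]]
    memory: list[str] = field(default_factory=list)

    def plan(self, goal: str) -> list[tuple[str, str]]:
        # Hypothetical fixed plan; a real system would ask an LLM to produce it.
        return [
            ("search", goal),
            ("summarize", "search results"),
        ]

    def run(self, goal: str) -> list[str]:
        for tool_name, task in self.plan(goal):
            # Each step sees what earlier steps produced via shared memory,
            # so the user never has to re-prompt with prior results.
            context = " | ".join(self.memory)
            result = self.tools[tool_name](f"{task} [context: {context}]")
            self.memory.append(result)
        return self.memory


def search(query: str) -> str:
    # Stand-in for a document repository or legal database lookup.
    return f"found 3 documents for: {query.split(' [')[0]}"


def summarize(task: str) -> str:
    # Stand-in for an LLM call; it only counts prior results in the context.
    return f"summary based on {task.count('found')} prior result(s)"


agent = Agent(tools={"search": search, "summarize": summarize})
steps = agent.run("indemnification caps in supplier agreements")
```

The point of the sketch is the shape: one goal goes in, the agent decomposes it, and each subtask reads from memory instead of from a fresh human prompt.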

Multi-Agent Systems

Professional-grade agentic AI often deploys multi-agent systems: coordinated networks where specialized sub-agents handle distinct tasks. In a legal research workflow, for example:

- One agent researches case law

- Another validates citations

- A third drafts the memo

- A fourth cross-checks consistency

Because downstream agents check the work of upstream ones, many errors surface before output reaches the attorney, not after.
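The four roles listed above can be sketched as plain functions chained into a pipeline. Everything here is an assumption made for illustration: the function names, the `VERIFIED_CITATIONS` set standing in for a verified legal database, and the case names, which are fictional placeholders.

```python
from dataclasses import dataclass

# Hypothetical stand-in for a verified citation database (fictional cases).
VERIFIED_CITATIONS = {"Smith v. Jones (2019)", "Doe v. Acme Corp. (2021)"}


@dataclass
class Draft:
    text: str
    citations: list[str]


def research_agent(question: str) -> list[str]:
    # A real agent would query a legal database; these values are made up,
    # and the second one is deliberately unverifiable.
    return ["Smith v. Jones (2019)", "Imaginary v. Case (2030)"]


def validation_agent(citations: list[str]) -> tuple[list[str], list[str]]:
    """Split citations into verified and rejected lists."""
    verified = [c for c in citations if c in VERIFIED_CITATIONS]
    rejected = [c for c in citations if c not in VERIFIED_CITATIONS]
    return verified, rejected


def drafting_agent(question: str, citations: list[str]) -> Draft:
    body = f"Memo on: {question}. Authorities: {'; '.join(citations)}"
    return Draft(text=body, citations=citations)


def consistency_agent(draft: Draft) -> bool:
    """Confirm every citation the draft relies on appears in its text."""
    return all(c in draft.text for c in draft.citations)


def run_pipeline(question: str) -> tuple[Draft, list[str]]:
    found = research_agent(question)
    verified, rejected = validation_agent(found)
    draft = drafting_agent(question, verified)  # only verified citations pass
    if not consistency_agent(draft):
        raise ValueError("draft cites authorities missing from its text")
    return draft, rejected


draft, rejected = run_pipeline("enforceability of non-compete clauses")
```

In this sketch the unverifiable citation is dropped before drafting and returned separately, so the attorney can see what was rejected as well as what survived.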

Agentic AI Still Requires Human Oversight

Agentic AI is not "set and forget." Well-designed agents know when to pause, flag ambiguity, and hand off to the attorney before proceeding. That checkpoint—built into the workflow, not bolted on afterward—is what makes agentic AI viable in legal practice, where every decision carries professional accountability.
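One way to picture a built-in checkpoint is as a gate that every step must pass before the workflow continues. The sketch below assumes the agent reports a confidence score per step and that steps can be tagged as high-stakes; the `CONFIDENCE_FLOOR` value and all names are hypothetical, not taken from any specific product.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate_to_attorney"


@dataclass
class StepResult:
    description: str
    confidence: float          # 0.0 to 1.0, as reported by the agent (assumed)
    high_stakes: bool = False  # e.g. anything filed with a court


# Hypothetical threshold; a firm would tune this during its pilot.
CONFIDENCE_FLOOR = 0.85


def checkpoint(step: StepResult) -> Action:
    """Pause for attorney review when the step is risky or uncertain."""
    if step.high_stakes or step.confidence < CONFIDENCE_FLOOR:
        return Action.ESCALATE
    return Action.PROCEED


steps = [
    StepResult("Extract governing-law clause", confidence=0.97),
    StepResult("Interpret ambiguous termination right", confidence=0.62),
    StepResult("Finalize court filing", confidence=0.99, high_stakes=True),
]
decisions = [checkpoint(s) for s in steps]
```

Note that the third step escalates despite high confidence: in this design, stakes override confidence, which keeps the attorney accountable for anything that leaves the firm.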

Why Agentic AI Is a Natural Fit for Legal Work

Legal work has a dual nature: it's both highly structured (governed by rules, precedents, statutes) and deeply contextual (every matter has unique facts and consequences). This combination makes it ideal for human-in-the-loop AI.

The Repetitive Burden

Legal professionals spend significant hours on high-volume, repetitive tasks:

- Research compilation and case law review

- First-pass document review

- Contract clause extraction and comparison

- Deadline tracking and regulatory monitoring

McKinsey research estimates that approximately 22% of a lawyer's job and 35% of a law clerk's job can be automated using current AI. Meanwhile, lawyers bill only 30-40% of their total working hours—meaning substantial time is lost to non-billable administrative work. Agentic AI absorbs this cognitive load without replacing professional judgment.

Compounding Value Over Time

When agentic AI is trained on firm-specific playbooks, clause libraries, and institutional knowledge, it converts tacit expertise into reusable firm infrastructure — the kind that doesn't walk out the door when a senior associate leaves. Over time, this creates compounding returns:

- Junior attorneys ramp faster by querying accumulated precedent and playbooks

- Repeat client matters benefit from pre-trained clause preferences and risk thresholds

- Institutional knowledge becomes auditable, searchable, and actionable — not siloed in email threads

Key Use Cases of Agentic AI in Legal Practice

Agentic AI use cases span from research to compliance. These aren't future scenarios—they're in production at leading firms today. According to Thomson Reuters Institute's 2025 GenAI report, legal professionals already use GenAI for document review (74%), legal research (73%), and document summarization (72%).

Legal Research and Memo Drafting

An agentic AI can receive a complex legal question, generate a multi-step research plan, search case law databases and statutes, synthesize findings, and produce a sourced research memo—all with citations lawyers can verify.

Unlike keyword search, agentic systems apply the law to specific fact patterns and proactively surface counterarguments—delivering insight, not just data. Thomson Reuters has run over 1,000,000 tests on CoCounsel, their legal AI assistant, with 1,500+ automated tests nightly under attorney oversight. Outputs are rejected if they contain material omissions, factual errors, or hallucinations.

A Forrester Total Economic Impact study found Lexis+ AI delivers $1.2M in benefits for corporate legal departments, with 284% ROI over three years and payback under 6 months. Key findings:

- 25% fewer hours on legal inquiries ($574,200 in recovered value)

- 50% paralegal time savings on administrative tasks

- Up to 13% reduction in outside counsel work ($602,500 in savings)

Contract Review and Due Diligence

Agentic AI can review thousands of contracts in M&A scenarios: extracting key clauses, flagging deviations from standard terms, identifying risk, and cross-referencing inconsistencies across documents.
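Flagging deviations from standard terms can be illustrated with a deliberately crude comparison against a clause playbook. This sketch uses character-level similarity from Python's standard `difflib` module as a stand-in; production tools presumably rely on semantic comparison rather than string matching. The `PLAYBOOK` contents, the `SIMILARITY_FLOOR` threshold, and the sample contract are all hypothetical.

```python
from difflib import SequenceMatcher

# Hypothetical playbook of standard clause language.
PLAYBOOK = {
    "governing_law": "This Agreement is governed by the laws of the State of New York.",
    "confidentiality": "Each party shall keep the other party's Confidential Information secret.",
    "limitation_of_liability": "Neither party's liability shall exceed the fees paid under this Agreement.",
}

# Hypothetical threshold below which a clause counts as a deviation.
SIMILARITY_FLOOR = 0.9


def similarity(a: str, b: str) -> float:
    """Character-level similarity ratio between 0.0 and 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def flag_deviations(contract: dict[str, str]) -> list[str]:
    """Return clause names that are missing or differ from the playbook."""
    flagged = []
    for name, standard in PLAYBOOK.items():
        actual = contract.get(name)
        if actual is None or similarity(actual, standard) < SIMILARITY_FLOOR:
            flagged.append(name)
    return flagged


# Sample contract: one clause matches, one deviates, one is missing entirely.
contract = {
    "governing_law": "This Agreement is governed by the laws of the State of New York.",
    "confidentiality": "The receiving party may share information with any third party.",
}
flagged = flag_deviations(contract)
```

Run across thousands of contracts, the same loop would produce a list of clauses for attorney attention rather than a verdict, which is the division of labor the article describes.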

What once took associate teams days or weeks now takes a fraction of the time. A techUK case study on Luminance AI documented:

- Over 60% reduction in contract review time

- Team manages 50+ contracts/day

- Over 90% of legal work kept in-house

- Data extraction reduced from hours to minutes

The "AI in M&A Dealmaking 2026" study (400 senior M&A professionals) found nearly 9 in 10 deal teams have moved beyond pilot stage, 80%+ use AI in early-stage diligence, and more than one-third report saving 21-30% of time on diligence tasks.

Case Preparation and Litigation Support

Agentic AI supports litigation through:

- Building case timelines from document sets

- Identifying patterns in judicial reasoning across precedents

- Detecting evidentiary weaknesses

- Distributing research tasks based on team expertise

Argument gap detection: Agentic systems surface counterarguments opposing counsel is likely to raise, helping litigators prepare more defensible positions.

Compliance Monitoring and Regulatory Analysis

Agentic AI can continuously monitor regulatory updates across jurisdictions and flag conflicts between proposed legislation and existing frameworks. For in-house counsel tracking obligations across multiple states or countries—say, simultaneous compliance with CCPA, GDPR, and sector-specific financial rules—this reduces the manual review burden that typically requires dedicated compliance staff or expensive outside counsel retainers.

Trust, Accuracy, and Ethical Guardrails in Legal AI

Legal work does not tolerate hallucination. A fabricated citation or misapplied precedent can expose a firm to malpractice liability. Professional-grade legal AI must anchor to authoritative, curated sources—not general internet data.

What "Trustworthy" Legal AI Looks Like

- Audit trails for every output

- Citation traceability to verified legal databases

- Transparent reasoning plans showing how conclusions were reached

- Built-in verification steps that flag uncertainty or gaps

Lawyers must be able to review and validate outputs in minutes, not hours — which means the tool's reasoning must be visible, not buried.
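An audit trail can be as simple as an append-only log in which each entry records the step, its output, its citations, and a hash linking it to the previous entry. The sketch below is one possible design using Python's standard `hashlib` and `json` modules; the field names and the `"GENESIS"` marker are assumptions, and the citation is a fictional placeholder.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(step: str, output: str, citations: list[str], prev_hash: str) -> dict:
    """Build one tamper-evident log entry chained to the previous one."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "step": step,
        "output": output,
        "citations": citations,
        "prev_hash": prev_hash,
    }
    # Hash the entry before the hash field itself is added.
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["hash"] = hashlib.sha256(payload).hexdigest()
    return entry


def verify_chain(trail: list[dict]) -> bool:
    """Recompute every hash and check each entry points at its predecessor."""
    prev = "GENESIS"
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        recomputed = hashlib.sha256(payload).hexdigest()
        if entry["prev_hash"] != prev or recomputed != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


trail = []
first = audit_record("research", "Found 2 authorities", ["Example v. Sample (2020)"], "GENESIS")
trail.append(first)
trail.append(audit_record("draft", "Memo v1", ["Example v. Sample (2020)"], first["hash"]))
```

Because each entry's hash covers its content and its predecessor's hash, editing any earlier output after the fact causes `verify_chain` to return `False`, which gives reviewers a quick integrity check before they rely on the log.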

Ethical and Professional Obligations

ABA Formal Opinion 512 (July 2024) establishes the ethical paradigm for lawyers using GenAI under Model Rules:

- Competence (Rule 1.1): Lawyers must maintain technological competence — "ignorance of ethical considerations is not acceptable"

- Confidentiality (Rule 1.6): Client data fed into AI tools remains subject to the lawyer's duty of confidentiality

- Supervisory duties (Rule 5.3): AI functions as a "nonlawyer assistant" — attorneys bear final responsibility for its output

- Candor and reasonable fees: AI-assisted work still requires honest representations to tribunals and proportionate billing

These obligations don't disappear when AI enters the workflow; they extend to how firms select and supervise their tools. Purpose-built legal AI with robust security infrastructure isn't optional; it's a professional requirement.

The Cost of Getting It Wrong

In Mata v. Avianca (2023), Judge P. Kevin Castel sanctioned attorneys Peter LoDuca and Steven Schwartz, along with their firm, for filing a brief that cited court opinions fabricated by ChatGPT. The court imposed a $5,000 penalty, jointly and severally. The judge noted "there is nothing inherently improper about using a reliable artificial intelligence tool" but attorneys have a "gatekeeping role" for accuracy.

How Legal Teams Should Build AI Fluency

AI fluency for legal professionals means knowing what AI can and cannot do — how to prompt it effectively, evaluate outputs critically, and decide when to override it. Coding or model-building isn't the point. Sound judgment is.

McKinsey research (April 2026) shows general AI usage surged from 30% (2023) to 76% (2025) of employees. Yet Thomson Reuters found that 64% of legal professionals received no GenAI training. Only 31% say training is even available.

Critical Competencies

Legal professionals need to develop:

- Framing the right research questions for AI systems

- Validating citations and reasoning chains

- Designing workflows that balance autonomy with defensibility

- Knowing when NOT to rely on automation

These competencies don't develop overnight — they build through deliberate, low-stakes practice before teams take on more complex AI-assisted work.

Practical Learning Path

Start with low-risk, standalone tasks where errors are catchable and consequences are limited:

- Deposition summaries — AI drafts, attorney reviews; good for building prompt discipline

- First-pass document review — tests AI pattern recognition while keeping human sign-off in the loop

- Research memo outlines — validates AI's ability to structure legal arguments before it touches final work product

Once teams develop consistent output quality in these areas, they're ready to integrate AI into higher-stakes workflows without compromising defensibility.

How to Get Started with Agentic AI in Your Legal Practice

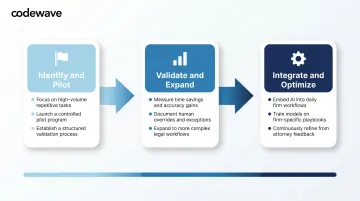

Phased Adoption Approach

Phase 1: Identify and pilot

- Start with high-volume, repetitive tasks where errors are recoverable

- Run a controlled pilot with defined scope and clear success metrics

- Establish a validation process before outputs reach clients

Phase 2: Validate and expand

- Measure time savings, accuracy improvements, and cost reductions

- Document what works and what needs human override

- Expand to more complex workflows once the team has built trust

Phase 3: Integrate and optimize

- Embed AI into daily workflows

- Train on firm-specific playbooks and clause libraries

- Continuously refine based on user feedback

Choose Purpose-Built Legal AI

Distinguish professional-grade tools from consumer-grade AI:

- Curated legal repositories (case law, statutes, regulations)

- Security infrastructure for client-privileged data

- Audit trails and citation traceability

- Workflow integration with existing practice management systems

The wrong tool exposes firms to ethics violations, data breaches, and inaccurate work product.

Build Custom Agentic Workflows

Custom agentic workflows, built around a firm's practice areas, clause libraries, and compliance requirements, deliver more value than off-the-shelf tools. Codewave works with legal organizations to design and deploy these systems using an outcome-based model (ImpactIndex™), where progress is tied to measurable results rather than hours billed.

Codewave's work in compliance-heavy industries translates directly to legal practice. Relevant capabilities include:

- AI-driven fraud detection and anomaly flagging

- Automated compliance monitoring across regulatory frameworks

- Intelligent document processing for contracts, filings, and discovery

With experience across 15+ industries, Codewave helps firms move from concept to production-ready systems with fewer detours.

Frequently Asked Questions

How is agentic AI different from generative AI in a legal context?

Generative AI responds to prompts with generated content, while agentic AI pursues multi-step goals autonomously. In legal practice, this means agentic AI can handle research, drafting, cross-checking, and summarization in one continuous workflow — rather than requiring a human to manage each step.

Can agentic AI replace lawyers?

No. Agentic AI is designed to augment, not replace, legal professionals. It handles repetitive, data-heavy tasks so lawyers can focus on judgment, strategy, advocacy, and client relationships—all of which require human expertise that AI cannot replicate.

What are the biggest risks of using agentic AI in legal work?

Top risks include hallucinated citations, use of non-authoritative data sources, client confidentiality exposure, and inadequate human oversight. The first three can be reduced by choosing purpose-built legal AI with audit trails and verified source databases; the last requires keeping attorney review built into every workflow.

What legal tasks are best suited for agentic AI right now?

Agentic AI performs best on high-volume, rule-governed tasks that benefit from scale and human review:

- Legal research and memo drafting

- Contract review and clause extraction

- Due diligence in M&A transactions

- Case timeline building

- Regulatory compliance monitoring

How should a law firm evaluate an agentic AI tool before adopting it?

Evaluate tools against four criteria before adopting:

- Draws from curated legal repositories, not general internet data

- Provides citation traceability and full audit trails

- Has security infrastructure designed for client-privileged data

- Integrates with existing firm workflows

Is agentic AI safe for handling confidential client data?

Safety depends entirely on the tool. Professional-grade legal AI should have robust, purpose-built security infrastructure, data residency controls, and no use of client data for model training. Firms must verify these commitments before deployment.